About Browse AI

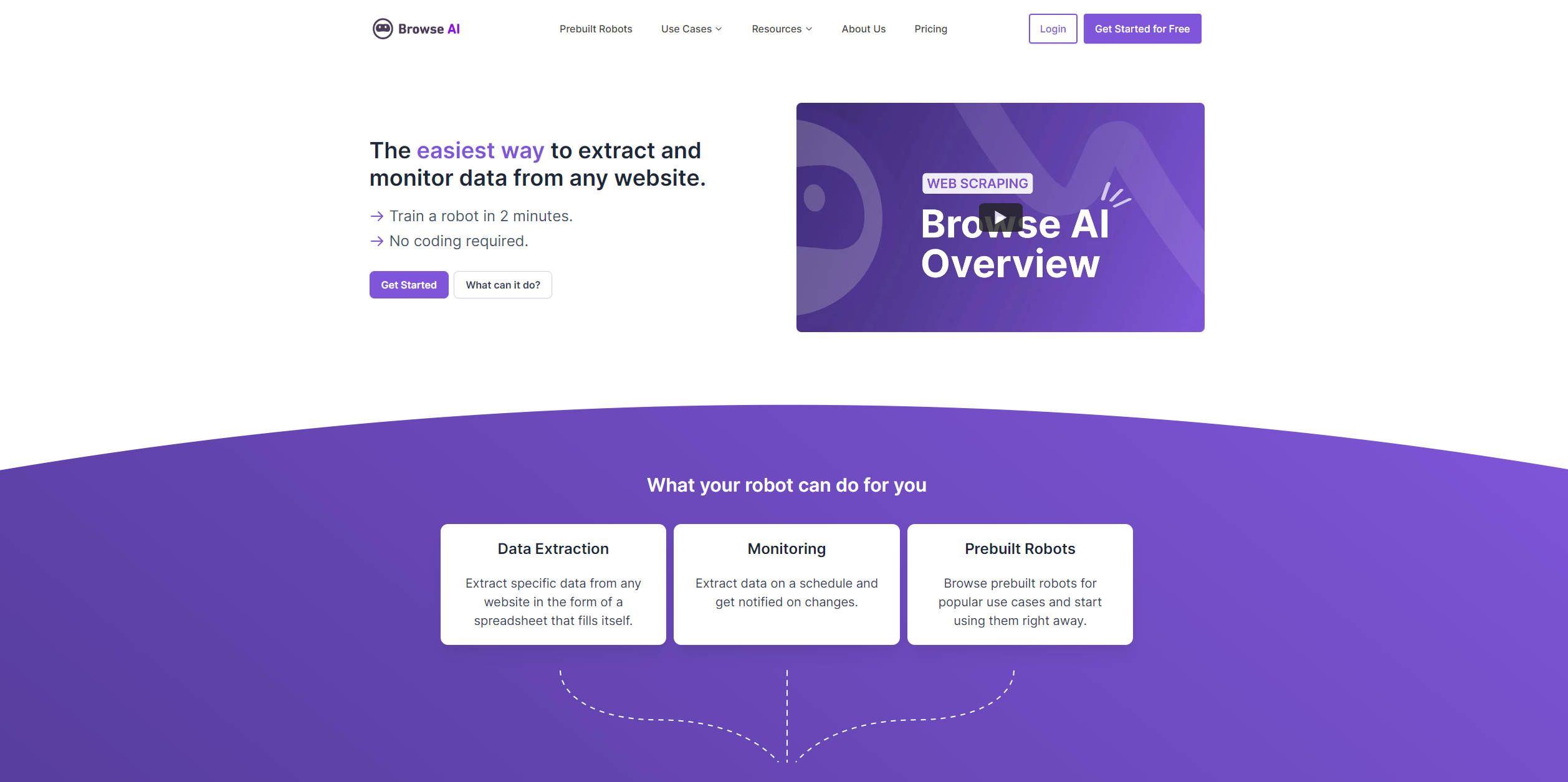

Browse AI is a no-code tool for extracting and monitoring data from websites. Users can build "robots" in minutes that extract data, monitor pages for changes, and emulate user interactions on a schedule. It is aimed at individuals and professionals who want to save time, cut costs, and work more efficiently with web data.

Key Features

- No-Code Data Extraction: Enables users to scrape structured data from websites without writing a single line of code.

- Automated Monitoring: Set up scheduled monitoring of websites and receive notifications when changes occur.

- Prebuilt Robots: Offers a collection of ready-to-use robots for common use cases, facilitating immediate deployment.

- Global Data Reach: Capable of extracting location-based data from websites around the world.

- Workflow Orchestration: Users can manage and coordinate multiple robots to automate complex tasks.

- Adaptive Technology: Browse AI robots can automatically adjust to changes in website layouts to maintain data extraction accuracy.
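
To make the monitoring feature above more concrete, here is a minimal sketch of the general idea behind change detection: compare the data extracted on the current run with the data from the previous run and report which fields differ. This is an illustration only and not Browse AI's implementation or API; the function names `fingerprint` and `changed_fields` and the sample record are hypothetical.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """Return a stable hash of a record; key order does not affect the result."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(previous: dict, current: dict) -> dict:
    """Map each field whose value differs to an (old, new) pair.

    Hypothetical helper: a real monitor would load `previous` from storage
    and send a notification whenever the result is non-empty.
    """
    # Cheap early exit when nothing changed at all.
    if fingerprint(previous) == fingerprint(current):
        return {}
    keys = previous.keys() | current.keys()
    return {
        key: (previous.get(key), current.get(key))
        for key in sorted(keys)
        if previous.get(key) != current.get(key)
    }


# Example with made-up data: only the price changed between two runs.
last_run = {"title": "Widget", "price": "19.99", "stock": "in stock"}
this_run = {"title": "Widget", "price": "17.99", "stock": "in stock"}
print(changed_fields(last_run, this_run))
```

Hashing the whole record first keeps the common "nothing changed" case cheap, and the field-by-field comparison runs only when a difference exists.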

Pros & Cons

Pros

- Time-Saving: Automates repetitive tasks, significantly reducing the time spent on data collection and monitoring.

- User-Friendly: The intuitive interface makes it easy for non-technical users to set up and operate robots.

- Scalability: Supports bulk runs of up to 50,000 robots at once, making it suitable for large-scale operations.

- Integration-Friendly: Offers compatibility with over 7,000 applications, expanding its utility across various platforms.

Cons

- Learning Curve: New users may need some time to learn how to make the most of all the available features.

- Dependency on Website Structure: Despite the adaptive technology, major changes in the structure of a target website may still require manual adjustments to the robots.