About EverMemOS

Key Features

- Four-Layer Memory Design: Separates agent behavior, long-term storage, indexing, and integration, so teams can drop EverMemOS in as a shared memory backbone across multiple agents and applications.

Pros & Cons

Pros

- True Long-Term Consistency: Helps agents maintain identity and context across days or months, instead of forgetting what the user said ten messages ago.

- Open Source and Enterprise Ready: Apache 2.0 licensing and a transparent GitHub codebase suit security-conscious teams that want on-prem or VPC deployments.

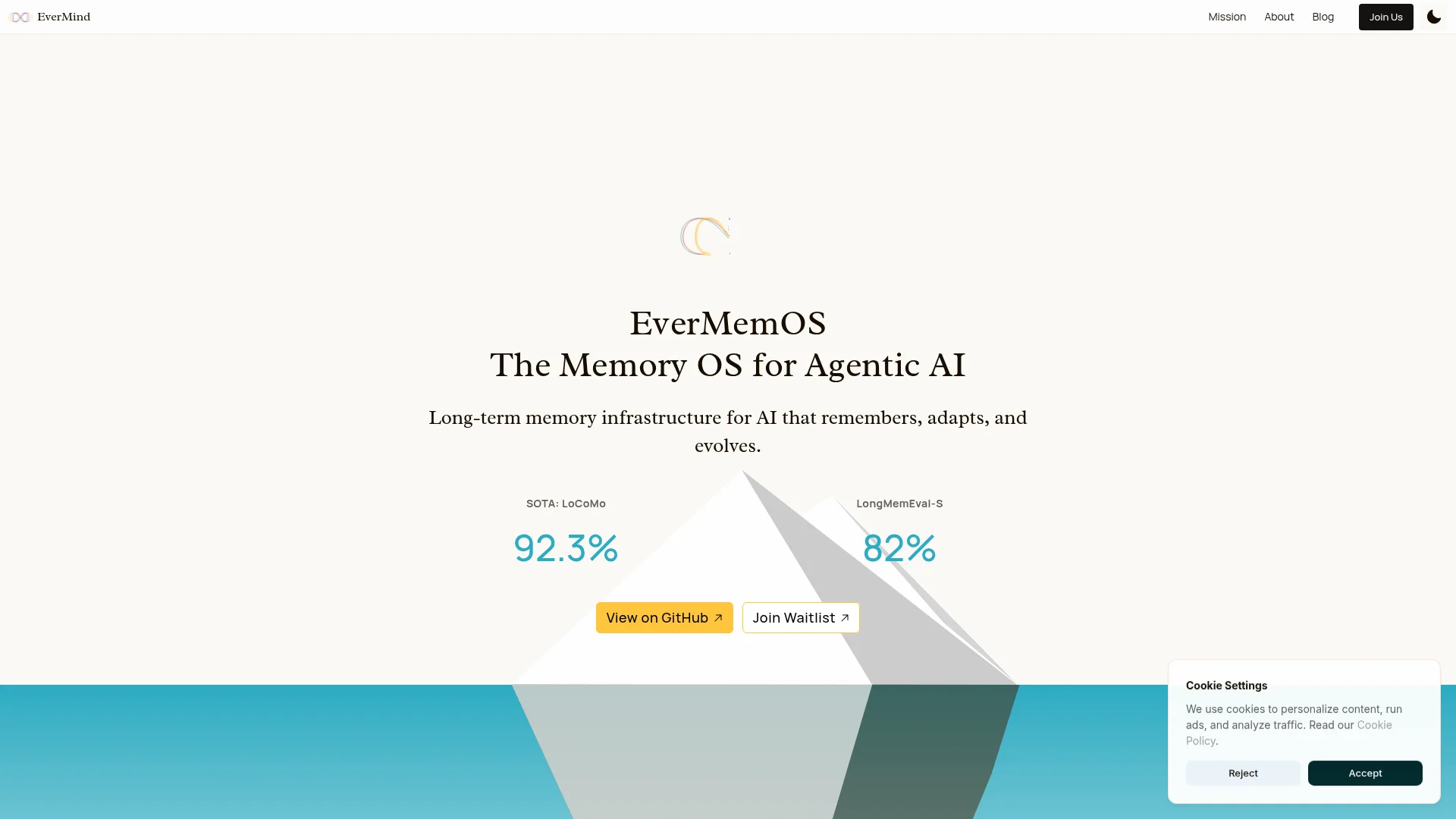

- Serious Benchmark Credentials: Strong results on LoCoMo and LongMemEval-S give technical buyers evidence that the memory system holds up under pressure, not just in demos.

- Rich Retrieval Modes: From ultra-fast BM25-only recall to multi-round LLM-based retrieval, teams can tune latency, cost, and quality for each use case.

- Good Getting-Started Experience: Quickstart scripts, sample data, and interactive chat demos make it practical to see the whole memory loop working in under an hour.
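
For readers unfamiliar with the BM25-only mode mentioned above, here is a minimal sketch of BM25 keyword scoring in plain Python. It is not EverMemOS code: the function names (`tokenize`, `bm25_scores`), the sample memories, and the parameter defaults `k1=1.5` and `b=0.75` are assumptions chosen for illustration. It shows why this kind of recall is cheap: ranking needs only term counts, with no model call.

```python
import math
from collections import Counter


def tokenize(text):
    # Naive whitespace tokenizer; real systems use a proper analyzer.
    return text.lower().split()


def bm25_scores(query, documents, k1=1.5, b=0.75):
    """Return one BM25 score per document for the given query."""
    tokenized = [tokenize(doc) for doc in documents]
    n_docs = len(tokenized)
    avg_len = sum(len(doc) for doc in tokenized) / n_docs
    # Document frequency: number of documents containing each term.
    doc_freq = Counter(term for doc in tokenized for term in set(doc))

    scores = []
    for doc in tokenized:
        term_freq = Counter(doc)
        score = 0.0
        for term in set(tokenize(query)):
            if term not in term_freq:
                continue
            # Smoothed IDF variant that is never negative.
            df = doc_freq[term]
            idf = math.log(1 + (n_docs - df + 0.5) / (df + 0.5))
            tf = term_freq[term]
            # Length normalization: longer documents are penalized via b.
            norm = tf + k1 * (1 - b + b * len(doc) / avg_len)
            score += idf * tf * (k1 + 1) / norm
        scores.append(score)
    return scores


# Hypothetical stored memories, for illustration only.
memories = [
    "user prefers vegetarian recipes",
    "user is planning a trip to lisbon in may",
    "user asked about python logging last week",
]
scores = bm25_scores("trip to lisbon", memories)
best = max(range(len(memories)), key=scores.__getitem__)
print(memories[best])
```

An LLM-based retrieval mode would typically add model calls on top of a ranking like this, which is where the extra latency and cost come from.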

Cons

- Nontrivial Infrastructure Footprint: Requires Docker plus MongoDB, Elasticsearch, Milvus, and Redis, which can feel heavy for small teams or hobby projects.

- Early Ecosystem: Although it is maturing quickly, it still has fewer out-of-the-box integrations than established search engines or vector stores.

- External LLM Dependency for Advanced Modes: Agentic retrieval relies on third-party LLM APIs, so costs and latency depend on whichever model provider a team chooses.